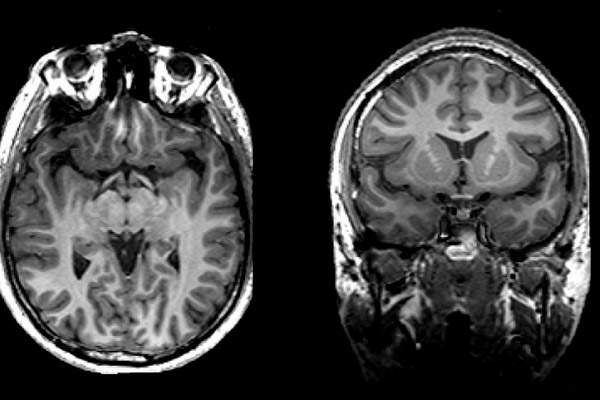

If our thoughts are actually patterns of activity in the brain, it's at least possible that neuroscientists could find a way to detect and decode them.

That may sound hard, even impossible, but in 2019 researchers made important progress toward this goal. They placed a grid of over 100 recording electrodes in the auditory cortex of patients who were undergoing brain surgery to treat epilepsy. With this electrode grid, they monitored electrical activity in the auditory cortex while the patients listened to recordings of actors reciting a series of sentences and the numbers from zero to nine.

Since trying to interpret the data from more than one hundred electrodes all recording at once would be hopelessly complicated, the neuroscientists used artificial intelligence to interpret the recordings.

They trained a deep learning neural network to use the recordings from the patients' brains to reconstruct the sentences the patients heard. Then they tested the trained network by asking it to reconstruct the spoken digits. The program was able to reconstruct intelligible speech from the neural recordings.

While it's not exactly the same thing as reading thoughts, it's an important step in that direction.

Neuroscientists already know that the sensory portions of the cortex are also used for thinking and imagining. The technology could be useful for people who, like the late physicist Stephen Hawking, have difficulty communicating because of serious speech impairments.

Sources And Further Reading

- Adam, D. Computer Program Converts Brain Signals to a Synthetic Voice. The Scientist, April 24, 2019.

- Akbari, H., et al. Towards Reconstructing Intelligible Speech from the Human Auditory Cortex. Scientific Reports, 9, 874, 2019.