Why We Probably Shouldn't Worry About A Slight Decrease In Scores On The SAT

The claim that only 43 percent of college-bound seniors are college ready is inaccurate, writes Matthew Di Carlo in his critique of the College Board’s report on SAT scores. From Shanker Blog:

The difference between “43 percent of college-bound seniors” and “43 percent of SAT takers” is not just semantic. It is critical. The former suggests that the SAT sample is representative of college-bound seniors, while the latter properly limits itself to students who happened to take the SAT this year. Without evidence that test takers are representative — which is a very complicated issue, especially given that SAT participation varies so widely by state — average U.S. SAT scores don’t reflect how “college ready” the typical college-bound senior might be.

Di Carlo makes a couple of important points for people trying to make sense of what the scores actually mean. First, a different group of students takes the test each year. Second, a one-point drop in reading scores isn’t a dramatic decline, even if it’s technically the lowest average in 40 years.
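To see why a changing pool of test takers matters, here is a minimal sketch in Python. Every number in it is hypothetical and chosen only for illustration; none comes from the College Board report, and the two "groups" are an invented simplification. It shows how an overall average can fall when no group of students scores any lower, simply because more students from a lower-scoring group take the test.

```python
def overall_mean(groups):
    """Return the average score across groups given as (takers, mean_score) pairs."""
    total_takers = sum(takers for takers, _ in groups)
    total_points = sum(takers * mean for takers, mean in groups)
    return total_points / total_takers

# Hypothetical data: two groups of test takers, each with a fixed mean score.
# Only the number of takers in the second group changes between years.
year_one = [(600, 520), (400, 460)]
year_two = [(600, 520), (500, 460)]

mean_one = overall_mean(year_one)
mean_two = overall_mean(year_two)

print(f"Year one average: {mean_one:.1f}")
print(f"Year two average: {mean_two:.1f}")
print(f"Change: {mean_two - mean_one:+.1f}")
```

In this made-up case the overall average drops by a few points even though both groups perform exactly as they did the year before, which is why a small year-to-year change in the national average says little by itself about how well students are prepared.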

For the record, Indiana’s reading and math scores didn’t change — 493 and 501, respectively, for two years running — and students scored a point higher on the writing test for an average of 476. (All three of those scores are just shy of the national average.) About 69 percent of Indiana students take the SAT.

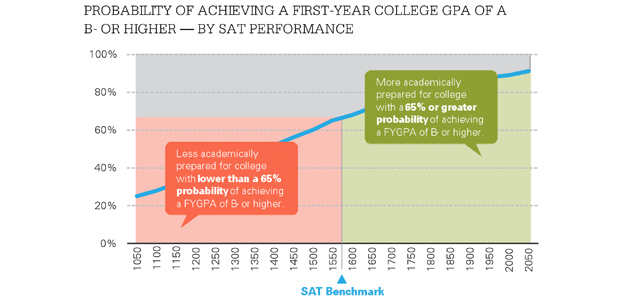

Graph courtesy of the College Board

Only about 43 percent of students from the class of 2012 who took the SAT scored 1550 or better, the score the College Board uses as an indicator of college preparedness.

Here’s what the College Board report can tell us: Based on students who have taken the SAT in the past, certain benchmark scores can act as predictors of college success. Students who score 1550 have a 65 percent chance of finishing their freshman year with a B- average or better. Forty-three percent of SAT takers in the class of 2012 scored 1550 or higher, hence the headline that fewer than half of college-bound seniors are prepared to continue their education.

So what can the SAT tell us about college preparedness? Di Carlo writes that the College Board avoids identifying trends in its annual report for a reason:

The annual confusion about SAT results (which has been lamented by researchers for a few decades) is, for me, exceedingly disheartening. If we as a public don’t always seem to understand that the SAT – a completely voluntary test, with ever-changing test-taker demographics – cannot be compared across years, then there may be little hope for proper interpretation of state test data, which suffer similar, but far less obvious limitations.